Introduction

On the Codex team, specs have become much lighter. Often, the documentation is just 10 bullet points before the team dives directly into development.

This change is largely due to the enhanced capabilities of the models. A few years ago, there was a lot of focus on refining prompts and making specs more complete and structured to ensure models executed tasks reliably. Now, the Codex team talks about skills more often. They have begun organizing common tasks into groups of callable capabilities that the model can invoke.

Thus, specs no longer take center stage; skills are becoming the new entry point, and development is shifting from “describing processes” to “organizing capabilities.”

We translated the latest podcast episode, which discusses not only how they develop products but also how OpenAI’s internal understanding of coding agents, skills, and development methods has evolved alongside model capabilities.

Writing Specs? We Write About 10 Bullet Points

Peter Yang: Hello everyone, welcome to today’s show. I’m excited to invite Alex and Romain from the OpenAI Codex team. Alex is the product lead for Codex, and Romain is in charge of developer experience.

Alex / Romain: Thank you for having us, we’re glad to be here.

Peter Yang: I’m curious about how your team uses Codex for product development. Alex, do you still write specs, or do you let GPT help you with that? What does the process look like, and which model do you use?

Alex: I think we write very few specs in the Codex team now. We have a core idea of letting those “closest to the implementation” make as many decisions as possible.

We only write specs in situations where the problem is too complex for one person to grasp. Honestly, a single person can hold a lot of information now since they can delegate most coding tasks. So, the scope of what one person can accomplish is much larger than before.

However, if the task requires coordination among several people or involves particularly tricky decisions, we might write a spec. Even then, such documents are usually very short—around 10 bullet points.

Peter Yang: Can you demonstrate this? For example, can you give Codex a few bullet points and have it write a more complete requirement or a markdown file?

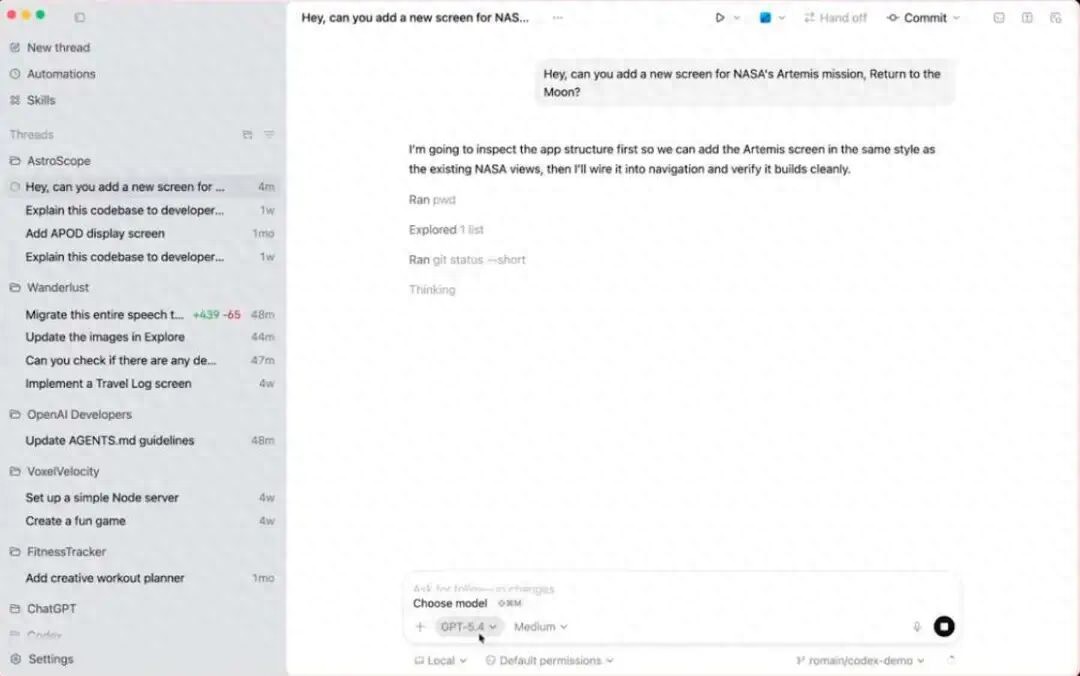

Romain: Yes, that can be done. But I want to show you a simple yet illustrative scenario. For instance, when developing an iOS app, you might just dictate a command by voice, such as “Help me add a new page about NASA’s Artemis lunar mission,” and send this prompt to GPT-5.4. The model will directly generate the new page for the iPhone app.

Imagine you are close to finishing a task, and new feature ideas start popping into your head, but you are unsure of the next steps.

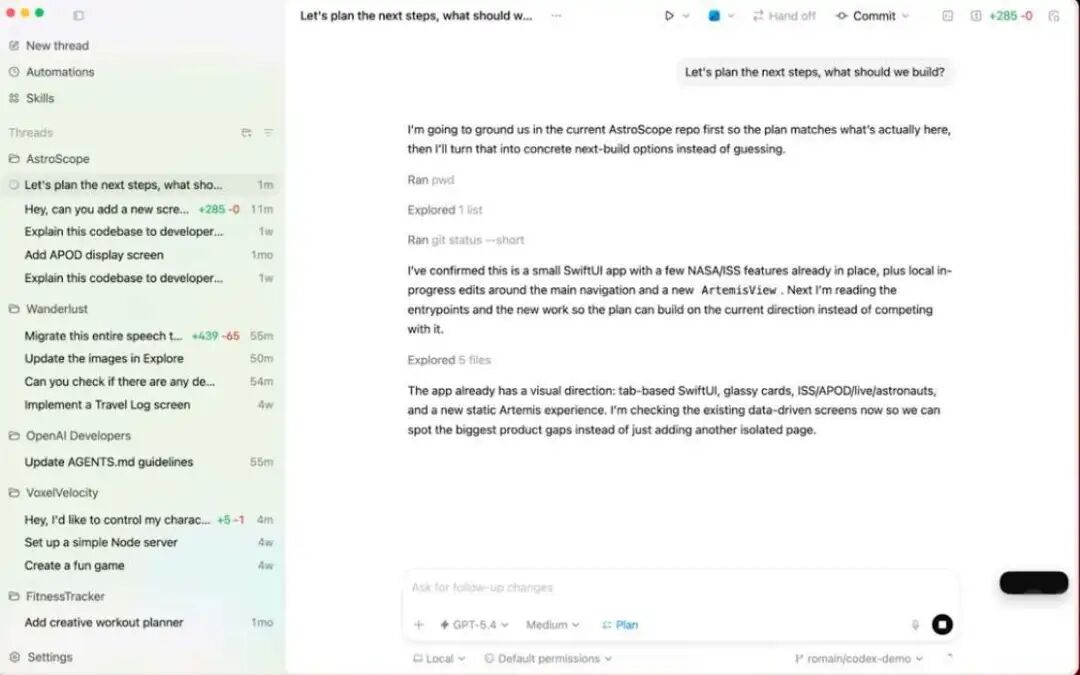

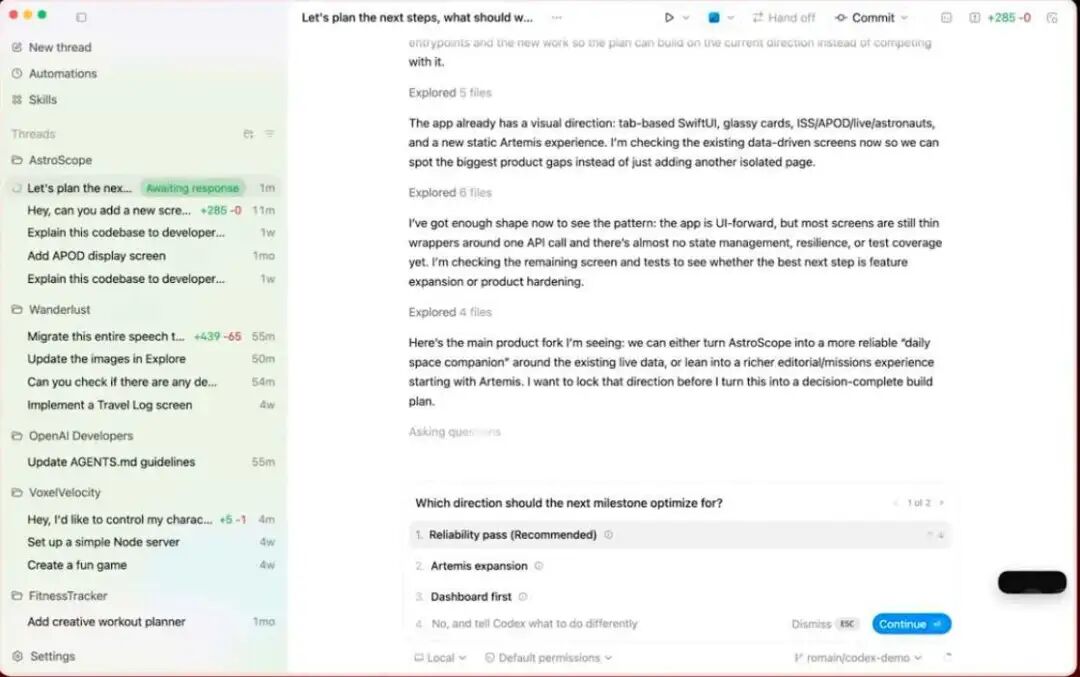

At this point, using Codex is interesting because if I say, “Let’s plan the next steps,” Codex automatically understands that I am trying to plan what to build next. If I press Shift+Tab, it enters plan mode. Then if I ask, “What should we do next?” I can use Codex as a brainstorming partner to plan the next steps together.

In this mode, it looks at the current code and project status, then proposes some ideas on its own. I can also add my thoughts, gradually guiding the model toward a better planning direction.

Now you can see it has started generating ideas based on the project status, code, and file content.

So that’s how I use Codex. Of course, in this demonstration, I didn’t provide much input initially. If I were Alex, the product lead, I would definitely provide more guidance upfront. But here, I intentionally let Codex propose some ideas on its own.

Alex: Many changes can actually be categorized into a few types. Some are very simple, and you just prompt it directly to make the change. Others are of medium complexity, where you might want to think about how to proceed or let it output a specific plan first.

But I often use a common approach similar to the previous example. When I have only a vague idea in my head, I open Codex and let it start thinking about “how this problem might be solved.” At this point, I don’t even have a clear feature definition. It will explore on its own and come back with questions for me.

Often, I don’t end up adopting the proposed solution because some changes may prove to be very complex. By the way, the question of “what code a PM should write” is worth discussing. For me, if it’s a complex change, I don’t necessarily want to be responsible for integrating it and maintaining it long-term, but I still go through plan mode and the exploration process. This way, I develop a better mental model of what needs to be done.

In the end, I hand over the “thought results” rather than the plan itself to the engineers. I believe what’s truly valuable is often not the plan document but the understanding I form through this process.

Interestingly, our Codex team’s designers now write more code than many engineers did about six months ago. We sometimes joke that they are really impressive now. Of course, tools play a significant role in this.

The team used to joke about how few PRs I had merged in the past year. I won’t disclose specific numbers, but I admit I should have done more. Especially considering that many of those PRs were just minor changes.

However, I believe the whole issue has changed now. The focus is no longer on whether you can generate code because agents are already very capable in that regard; you can fully delegate tasks to them. What’s becoming increasingly important is deciding what to do. In other words, are we aligned in direction, and do we truly understand what this product is becoming?

After that, another equally critical question is how we ensure that the final product is of high quality. Some people proudly say that the entire app was vibe coded. For Codex, indeed, most of the code is generated by the agent. Yet, even so, we still invest a lot of effort and attention into thinking about the system itself to ensure it is genuinely high quality.

That’s why, when faced with a particularly complex feature, I usually ensure it has a more stable, long-term owner responsible for it. I don’t think PMs should own parts of such systems because PMs are often interrupted by various tasks and fill gaps. So, you wouldn’t want a PM to maintain these systems long-term.

Peter Yang: Right, you definitely wouldn’t want a PM to maintain the code for a feature. That doesn’t sound like a good idea. I think we would definitely mess it up. That’s very real. But speaking of the product itself, I do like the feel of Codex. There are other strong products out there that I also like, but many tools really require a lot of time to learn. I even feel that if I don’t browse Twitter regularly, I might not know how to use those other pro products at all. But one thing I particularly like about Codex is how easy it is to get started. The entire app is very intuitive and simple. Yet, at the same time, it has some advanced capabilities, like skills and automations. Do you use these extensively internally?

Romain: Yes, very much so. In fact, I think skills might be the most interesting type of capability in the Codex app interface.

For example, if you are working with designers using Figma, a great feature is that you can open the Figma skill, which will directly pull in details from the Figma file, including React components, variables, etc., and Codex will write the implementation based on that content.

For instance, if you are developing an app and want to share it with others or deploy it to Vercel, Cloudflare, Render, etc., these skills are already there. You just need to tell Codex what you want to do, and it can seamlessly integrate into that entire task ecosystem.

A few days ago, I was chatting with a friend who had a lot of ideas for improving a product. He told Codex to use that skill to write all those tasks into Linear so he could track them. Then, when all the tasks were listed, he said, “I’m going to sleep now; you continue to implement and check off the tasks we just discussed one by one.” The next day, he woke up to find everything was done.

OpenAI’s Changing Perspective on Codex: Open Harness and Empowering Models

Alex: Returning to the simplicity of Codex, I think sharing our design philosophy might be interesting.

One particularly fascinating aspect of product development in this field is that developers naturally love to create tools for themselves and automate workflows. Therefore, a crucial principle for us is that the product must be highly configurable.

For instance, Codex’s harness is open source. Users can dive deep and make extensive modifications. It often happens that while we are developing a feature that hasn’t been officially launched yet, people on Twitter are already complaining about it being broken. The reason is that they have gone ahead and modified the code or forked the project to use the feature early. To me, that’s one of the best parts of the product. It means that the most cutting-edge users are already living in the future with us, exploring and pulling us toward that future.

On the other hand, if you design products solely for this group, the final output can become nearly incomprehensible, and users would indeed have to spend all day on Twitter to know how to use it.

So our approach has always been to carefully define those core primitives, which are the most fundamental and critical parts of the product. Those areas require serious thought and should not be treated lightly.

We think carefully about how to make the entire product as “invisible” as possible, allowing the model to shine. This way, every time the model becomes a bit stronger, it can naturally take on more tasks. Then, on that foundation, we consider how to package it into a system that is as configurable as possible for advanced users to explore.

For example, there are already people in the community experimenting with the implementation of sub-agents. This functionality is already out there, being used and tinkered with, and we have learned a lot from how users are utilizing it. Although we are not actively pushing this feature to everyone in the product, users have discovered and started using it on their own.

Next, we will think about how to make these things easier for others. The Codex app itself is an example of this. Around the time of GPT-5.2 Codex, I remember it was around December, the model capabilities were steadily improving, but suddenly we crossed a threshold. At that point, you could delegate longer and more complex tasks to the model, and it often completed them in one go.

We began to see that many people were already using tmux. For those unfamiliar with the term, tmux is essentially a “terminal multiplexer” that allows you to manage multiple sessions, windows, and panes in one terminal, enabling you to run many tasks in parallel.
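For readers who have not used tmux, the pattern described here can be sketched roughly as follows. This is a minimal illustration, not how the Codex team or any particular user actually sets things up: the helper name `tmux_parallel_commands`, the session name, and the placeholder `echo` tasks are all assumptions. The function only builds the tmux command lines (one window per task in a single detached session) so they can be inspected before anything is run.

```python
import shlex


def tmux_parallel_commands(session, tasks):
    """Build tmux command lines that run each task in its own window.

    `session` is a hypothetical session name; `tasks` maps a window name
    to the shell command that should run in that window.
    """
    if not tasks:
        raise ValueError("at least one task is required")
    commands = []
    for index, (window, command) in enumerate(tasks.items()):
        if index == 0:
            # The first task creates the detached session and names its first window.
            commands.append(["tmux", "new-session", "-d", "-s", session, "-n", window])
        else:
            # Each later task gets a new window in the same session.
            commands.append(["tmux", "new-window", "-t", f"{session}:", "-n", window])
        # Type the command into that window and press Enter.
        commands.append(["tmux", "send-keys", "-t", f"{session}:{window}", command, "Enter"])
    return commands


if __name__ == "__main__":
    # Placeholder tasks; in practice each one might start an agent or a long job.
    demo_tasks = {
        "tests": "echo 'placeholder: run tests'",
        "docs": "echo 'placeholder: build docs'",
    }
    for argv in tmux_parallel_commands("agents", demo_tasks):
        print(shlex.join(argv))
```

Passing each list to `subprocess.run` would actually start the session, after which `tmux attach -t agents` shows every task in its own window.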

We started seeing some crazy visuals on social media, like Peter Steinberger’s image—dozens of terminal panes filling three monitors, all running various tasks with Codex.

On one hand, we were excited; on the other, we continued to ensure that this “delegated execution” capability was reliable in the most basic CLI products. However, we realized that this might be the working style of the top 1% of engineers. The question became how to make this experience intuitive enough for everyone.

Thus, the Codex app emerged. When you open it, it feels very simple, like a chat window. It helps you get things done. Then you gradually discover that there’s a sidebar, that you can run multiple tasks simultaneously, and that switching between these tasks is very easy. Soon, you feel particularly efficient. Next, you realize there’s a skills tab. We want to make this experience feel a bit like playing a game, where you discover the next capability step by step.

Romain: Absolutely. I believe from the very beginning, we’ve had a clear vision that the future of coding will increasingly become a mode of “delegating tasks to agents.”

Even a year ago, when we first started working on Codex, we envisioned a future where engineers would handle many tasks in parallel.

However, at that time, the model’s capabilities were not yet fully realized. Later, we saw the turning point with GPT-5.2 Codex and subsequent models, where the model began to work reliably and meticulously for several hours, even days. At that stage, looking back, it seemed odd to have users open a bunch of tabs in the terminal and let them run for hours.

That’s why we needed a new product form. I think the interface that later became the Codex app matured at just the right time.

Alex: Indeed, there have been two notable “atmospheric shifts” in Codex’s history.

The first was around August when we launched the cloud product for Codex. The idea itself was great, and everyone was excited then and still is. However, looking back, it was a bit premature.

Around the same time, we released the interactive programming model for GPT-5. Our thought was to address the “problems the model can now solve.” So we launched Codex CLI and IDE extensions, and growth began to explode. I remember that during those months, the scale grew by about 20 to 30 times, which was fantastic.

The second change occurred around December to January. By that time, we could finally return to the original vision of truly delegating work to the model.

We Only Do Short-Term and Long-Term Planning, Never Mid-Term Planning

Peter Yang: Let’s delve deeper into the development process of the Codex app. Did you have an annual roadmap? For example, did you write down a plan a year ago stating, “By a certain time, we will launch the Codex app”? Or did you more react to market trends and create a bunch of prototypes? How did this product come to be?

Alex: Neither. Actually, I heard a particularly good piece of advice from an OpenAI researcher, Andre. He told me that at OpenAI, you either do short-term planning or long-term planning, but you don’t do mid-term planning.

Because mid-term planning is too difficult. Short-term usually refers to the next eight weeks; that’s basically the limit. You need to think about whether there’s a specific goal that can rally the team around it to get it done. This is something we excel at at OpenAI: organizing the team around a clear objective.

The other type of planning is to grasp a longer-term “feeling.” For example, you might think that a year from now, the model will be much smarter. It sounds obvious now, and in fact, the change didn’t even take a year, but if you think back to that time, you might have thought:

In the future, we will have very powerful models, and we won’t want them to “borrow our computers” to do tasks, because that way they can only handle one task at a time. What we really want is to have almost unlimited models working independently, validating results, deploying code, and monitoring operational status. Eventually, we might not even need to prompt them one by one.

So you start imagining an overall atmosphere and direction for that future. As for the middle layer, it becomes awkward. The so-called middle layer is usually the product roadmap, and we don’t really have a traditional roadmap.

What we truly have is a long-term direction and some specific actions we believe will push us toward that direction. For instance, regarding the Codex app, we had a strategic goal of decoupling ourselves from a “specific workspace.”

This phrase sounds a bit abstract. Let me explain. When you use an IDE like VS Code, which is my favorite IDE, you are usually tied to a specific workspace, which is a specific checked-out codebase or a specific folder.

Even if you use git worktree, you can essentially only open one worktree at a time. So fundamentally, you can only handle one task at a time. The same goes for the CLI. But because we had that vision from the start, of users working alongside agents running independently in the cloud, we knew the product must eventually reach a state where you could naturally converse with multiple agents, or even with just one agent that orchestrates multiple agents behind the scenes.
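To make the worktree point concrete, here is a minimal sketch of giving each task its own checked-out directory with `git worktree add`, so several tasks can proceed side by side even though each editor window or CLI session still sees only one of them. The helper name `worktree_commands`, the `task/<name>` branch naming, and the sibling-directory layout are assumptions for illustration, not something described in the conversation. The function only builds the git command lines; it does not run them.

```python
from pathlib import Path


def worktree_commands(repo_dir, task_names, base="HEAD"):
    """Build one `git worktree add` command per task.

    Each task gets a new branch (task/<name>, a hypothetical convention)
    checked out in a sibling directory next to the main repository.
    """
    repo = Path(repo_dir)
    commands = []
    for name in task_names:
        # Sibling directory such as <parent>/<repo>-<task>.
        worktree_path = repo.parent / f"{repo.name}-{name}"
        commands.append([
            "git", "-C", str(repo),
            "worktree", "add",
            "-b", f"task/{name}",   # create a new branch for this task
            str(worktree_path),     # where the worktree is checked out
            base,                   # commit the new branch starts from
        ])
    return commands


if __name__ == "__main__":
    # Placeholder repository path and task names.
    for argv in worktree_commands("/src/app", ["fix-login", "new-page"]):
        print(" ".join(argv))
```

Each resulting directory is an independent checkout, which is what lets separate agents or terminal sessions work in parallel without overwriting each other's files.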

However, we learned something: if you start from the cloud, it can be challenging for developers to derive value. Their commonly used tools aren’t there, and they have to set up the environment first. Moreover, if a task is only half completed by the model, it’s hard to get “partial results.” Often, when the model is halfway through, you need to step in to correct its direction or make slight adjustments.

So we thought we needed a local experience that would free itself from the constraints of a specific folder while still feeling natural when working across various folders on your computer.

Thus, when we began developing this app, there was a layer of abstract, even somewhat esoteric directional thinking. Meanwhile, engineers had already created many prototypes, all sorts of implementations of “I wish we had an app.” Some people made this version, others made that version. We even held a hackathon where several people independently created different versions of the app. You might have made one at that time; I can’t quite remember.

So when this project truly started, the only thing that really needed to be documented was why we believed “creating an app is a good idea.” There wasn’t a very specific spec for the app itself at first. Of course, some documentation gradually emerged during the development process, but initially, there was quite a bit of debate.

At that time, there was a real discussion: should we make an app? After all, the IDE extension was already very popular. Shouldn’t we just focus on improving the IDE extension? The CLI is also important; it seems to be a core part of this field. If we really want to make an app, what’s the point of it? Where should it go? These questions didn’t have standard answers at the beginning.

Romain: Fortunately, our IDE extension was already quite mature and polished. You could use it in environments like VS Code, Cursor, Windsurf, etc. So we brought a lot of mature experiences from the IDE extension codebase as a solid starting point.

Alex: Yes. In fact, the app and IDE extension share quite a bit of code. More precisely, they are built on the same shared core.

The core harness, whether for the app or IDE extension, is written in Rust and is open source. The CLI is also based on it. So there’s a lot of sharing and a very deliberately designed layered structure.

Peter Yang: Looking back now, it seems obvious that making the app was a good idea. After all, using the Codex app is definitely easier than opening a bunch of terminal windows. But at the time, was the core reason for building this app that it is more user-friendly for beginners, so you can get started as if you were playing? Or was it that it is the best interface for managing multiple agents simultaneously?

Romain: Yes. I believe our thinking has always been very “AGI-oriented.” We have always been considering what kind of future we are sliding toward.

However, if we adjust the order, a more accurate statement would be: we first knew we had to create an interface that made “delegating tasks to multiple agents” feel very natural. Because we knew the model would eventually be ready to support this approach. In fact, we have already seen people starting to delegate tasks between different agents.

So we need an interface where this process feels natural and that extends smoothly to the cloud in the future. At the same time, the whole experience must be ergonomic: users should not feel they are awkwardly struggling with “how to delegate to multiple agents simultaneously”; it should feel like the most natural way to work.

Romain: By the way, this experience attracts not only beginner developers. On the contrary, even within OpenAI, the most productive and experienced engineers are now using the app as their primary working method. For example, Peter, who came from OpenClaw, and Greg Brockman, are now primarily using this app to build things.

So this is fundamentally the realization of the “agent-style delegation” vision. It’s not that the best engineers will always stay in the terminal; in fact, they are also transitioning to the app.

Alex: Yes, we hope so. We keep mentioning Peter because he just joined OpenAI, and we are really excited. After all, he has worked on OpenClaw and is very creative. I’m not sure if I told you before, but last October, I took a walk with him in San Francisco.

At that time, I didn’t directly tell him we were considering making an app, but I started tentatively discussing the idea of a new interface that would make “task delegation” feel more natural. His attitude at that time was basically that he would never use such a thing.

Then last weekend, he surprisingly tweeted that this app is actually quite good. It was like seeing the sun rise in the west. He has started to like it.

Peter Yang: I’ve also spoken with Peter. If you really get him to start using the app, that would be a major achievement because he usually opens twenty terminal windows at once. That’s really impressive. Alex, you seemed to be the only PM for Codex for a long time, right? How many people are on the Codex team now? Fifty? A hundred?

Alex: It’s roughly in that range. About that. I think we were around eight people last May, right?

Romain: Yes, about that.

Alex: I can’t recall the exact number now, but we have indeed grown very quickly since then. So now we are probably between fifty and a hundred people.

After the Model Strengthens, Codex Takes Over Everything with Skills

Peter Yang: So what does a typical day look like for you? Do you even have a “typical day”?

Alex: Interestingly, I’ve been thinking about this question lately because I realized I don’t really have a straightforward answer. I later realized that my work state actually switches between different modes.

First, let me clarify that this isn’t advice for others; it’s just my personal work style. For example, before we released the app, I was in a very pure execution mode. In that state, I was fully focused on execution, obsessing over quality, ensuring we didn’t overlook any corners, and getting every little detail right.

In this mode, I spent a lot of time in Codex. On one hand, we indeed use Codex extensively to understand what’s happening. For instance, I would use Codex to check Slack for feedback; I would have Codex summarize this content, follow up, and then send it to Linear. So, just understanding the current quality status requires a lot of use of Codex.

On the other hand, I also use Codex to understand code-level issues and directly make modifications with it. Because now, if it’s just a small change rather than building a new system, letting it help me finish the task, testing it, and submitting a PR is often faster than communicating with someone else and having them prioritize this task among a thousand other things—especially when our goal was to release the app within two weeks.

Besides these, there are certainly many very “human” aspects, like motivating and mobilizing everyone, while also maintaining a critical perspective on what we are doing. So this is a work mode I can clearly perceive. Interestingly, if I’m in this mode, you’ll find that I tend to be more active on Twitter. I don’t know why, but when it comes to the social side, I usually find myself browsing Twitter more during that time.

But I also have another mode. For example, I currently feel very strongly that we have reached a stage where the model is very strong; GPT-5.4 is astonishing. At the same time, the product form of the app is more popular than we expected, and we have now covered all platforms, including Windows.

So my focus has shifted to thinking about “what should we do next” and understanding the current state of the whole situation.

This feels more like a coordination mode. In this mode, I actually spend less time writing code in Codex and more time using Codex for communication. So at least for me, I can distinctly feel that I have these two modes. There might be more than two, but at least these two are the most obvious.

Peter Yang: How much cross-functional alignment do you typically need to do?

Alex: The Codex team itself is fantastic. We actually do very little cross-functional alignment internally. We somewhat intentionally see ourselves as a “pirate ship” team.

Even within the Codex team, it’s just me, along with two recently joined PMs and a few leads. Until recently, everyone basically shared the load together. Our work style is more like a group of people mixing together to push things forward quickly rather than doing a lot of formal alignment.

So, there isn’t much alignment within the team. However, it’s becoming increasingly clear that building Codex involves constructing a coding agent. Now everyone can see that coding agents are not only useful for writing code but also for many other types of work.

We’ve seen many people using the Codex app for tasks beyond just coding. Furthermore, now most people at OpenAI are using the Codex app, even those not in technical roles. I see this app everywhere in the company.

So when you realize that Codex is not just serving coders but is becoming useful in a broader context, it indeed requires more cross-functional alignment. Because OpenAI also has ChatGPT, which is a product used by many, we need to think carefully about how to approach this.

Romain: From the developer experience perspective, we have almost become an extension of the Codex team. Most of our energy is now focused on Codex, but there are several reasons for this.

On one hand, of course, it’s an exciting product, and developers genuinely love using Codex, so we will continue to improve it. On the other hand, as Alex mentioned, we also have different modes. For instance, when preparing for a release, we rush to the front lines with the Codex team, preparing release assets, various materials, and thinking about how to present Codex’s value maximally. Once the product is out, we switch to another mode, educating developers on how to use Codex in various ways.

But there’s another layer of reason that makes this particularly important for us. When you look at the larger OpenAI platform, you’ll find that millions of developers are building things based on the OpenAI API. They are using models and various modalities, from image generation to Sora to speech-to-speech.

And you know what? The best entry point for developers has now become Codex. If you turn the clock back to a year ago, or even just back to last summer when we launched GPT-5, we needed to write a lot of guides to teach people how to prompt GPT-5 because it was a reasoning model, quite different from GPT-4.

But now our approach has changed. Even for these use cases, we try to teach developers to directly use Codex and skills. For example, if you need to update an integration, you should most likely use Codex along with the corresponding skill, and Codex can usually help you handle that.

From this perspective, our work has also become very cross-functional because we see Codex as the cornerstone of the entire developer platform.

Alex: One more interesting point is how we collaborate with each other. Honestly, one of the best parts of working on Codex is the community. This includes both the online internet community and the people we meet at offline events. Many things we organize revolve around this core.

For example, we pay great attention to the release rhythm, when to launch new things; we also value feedback greatly. When the community starts providing feedback, we quickly fix issues and communicate. So our entire team is very “online,” always keeping an eye on community trends.

Take the release of the Codex app, for instance. We collaborated very closely with Dom’s team. He essentially helped us coordinate a wide-ranging alpha test covering many users. We were building the product with these users, gathering feedback, adding skills, enhancing the capabilities used in the app, preparing documentation, and so on.

So I think this is a unique advantage of the Codex team. Ultimately, it’s because we are open source. Because we are open source, many things naturally evolve into being very open about what we are doing. And the community indeed rewards this openness.

We even have Codex ambassadors spread across many cities and countries who organize local events to teach people in their communities how to use these tools. Of course, I wish I could visit every city, but that’s clearly unrealistic. So seeing the community being so energetic and passionate, proactively organizing events, hackathons, and building things together is truly wonderful.

“Lobster” Will Be Integrated into ChatGPT

Peter Yang: Next, let’s talk about Peter. I consider myself an early user of OpenClaw. It does have some rough edges and minor issues, but it has genuinely helped me accomplish many tasks. For instance, a few days ago, because it remembers our previous conversations, it gave me a rather crude but motivational “spiritual pep talk” lasting about three minutes. Honestly, that might be the most insightful thing I’ve heard from AI. So I’m curious: how are you integrating Peter into the team? Also, does this vision of a “personal agent” relate to what he is currently working on? How do you think about this?

Alex: There are actually two layers to this. I can’t say too much, but the first point is that he is a super, super heavy user of Codex. OpenClaw was largely built using Codex, so he continuously provides feedback to the team and actively participates in efforts to improve Codex. In a way, this is his “side job,” but he is indeed doing it, and we are very excited about it.

As for the other part, I can’t say too much yet. But broadly speaking, he is indeed helping us build the next generation of personal agents, and it is being integrated into ChatGPT.

Romain: One thing that fascinates me about Peter is that, of course, I’ve known him for a while, and many people saw a glimpse of the “future” when they first played with OpenClaw.

But the truly impressive part is that Peter recognized this vision early on. If you look back at 2025, he worked on over 40 open-source projects last year, but these projects were all centered around the same vision: I need a command-line interface to access my calendar, I need a command-line interface to access my tweets and Gmail.

By continuously working on these projects, he has made a vision concrete: one that revolves around skills and command-line tools, the same building blocks we use today for coding agents. In the future, it clearly won’t stop at coding agents; it will evolve into various types of personal agents.

Thus, Peter is very well-suited to provide us with feedback throughout this process, as many of the tools that have entered the open-core ecosystem were built by him.

Peter Yang: I feel the same way. Romain is right; he’s a one-man show who has built a fantastic open-source community. And honestly, it’s made me less inclined to open other apps. Now I just talk to my little bot, and it’s completely different.

Alex: Wait, what have you connected it to? Have you connected it to everything?

Peter Yang: Pretty much. I’ve connected it to a lot of things. It can see my banking information, YouTube data, and I’ve connected it to voice, calendar, and various Google services. Sometimes I lie in bed talking to it, and my wife asks who I’m talking to, and I say I’m talking to my OpenClaw bot. It keeps giving me ideas. However, there are indeed many people out there charging for “helping people set up OpenClaw,” with prices even reaching $5,000. So if you can really make this a product for the general market that ordinary people can use smoothly, that would be enormous.

Alex: Yes, we are working on it. I will update you later.

The Traditional Career Ladder is Becoming Less Relevant

Peter Yang: Alright, let’s wrap up with some more provocative topics, Alex. Maybe I’m mistaken, but I think I’ve seen you say that many teams no longer need as many PMs. Let’s spice this up a bit. What do you think, brother? Do we still need PMs?

Romain: I think the most astonishing thing about these tools is that the changes they bring are even more profound than just the question of whether we need PMs or not.

In my view, the boundaries between almost all career ladders are starting to blur. It used to be that designers were over here, engineers were over there, and PMs were in another place, with some kind of ideal structure in terms of headcount.

But now, if you are an engineer, you will obviously become more efficient; if you are a designer, you suddenly gain some “superpowers” to become more technical; if you are a PM who primarily wrote strategic documents before, now you can directly create prototypes.

This doesn’t mean you have to be responsible for a feature aimed at a billion users, but you can certainly showcase a slice of that vision to the team by “doing it yourself.” So I think the most captivating aspect is that the lines between all career ladders are becoming blurred, and we are all becoming builders.

Alex: That resonates with me. Let me try to recall what I actually said. I remember saying something online along the lines of: if a startup has fewer than 20 engineers but already has a PM, that might be a warning sign.

But what I meant to express is quite similar to what you just said. Now the boundaries of all roles are mixing together. Designers can do more engineering work, engineers can do more design, and PMs can do more building work.

Moreover, many engineers didn’t take on task triage or project management roles largely because they had to spend their time writing code. But now that writing code is much easier, you can let agents like Codex analyze feedback and prioritize tasks, freeing up everyone’s time.

So I believe that, to some extent, everyone can do a part of each other’s work. Scott Belsky has a phrase for this, “talent stack collapse,” which I really like, and I believe it is indeed happening.

I have a strong view that when fewer people are needed in a room to do something, things usually get done better, and decisions become purer.

The next question is, if that’s the case, what remains for PMs? I think many PMs should transition. For example, if you are a PM but have always wanted to be an engineer, perhaps you were good at coordinating people but lacked strong engineering skills, now you might want to become an engineering manager instead. With coding agents, this can absolutely work, and it might be a cleaner, more natural role for you.

The same logic applies to another type of PM; perhaps they actually want to do design, and now they should get closer to design and building. But ultimately, the most critical factor is interest. Interest and initiative may be the two most fundamental and important qualities for people in the AGI era.

So I ultimately think about the question very simply. If you inherently prefer writing code, and you’ve only been a PM because “someone has to do it,” then you should let go of the old role and simply become an engineer, doing the same work as an engineer would. The same goes for design.

But if what you genuinely enjoy is spending time with users, even if it takes you a bit away from building, or if you particularly like observing the market and predicting where it will go, then in a sufficiently large team, if there are enough engineers, I believe the PM role can still have space. But ultimately, it depends on what you truly want to do.

To add one more point, I still believe that every problem domain needs a human responsible for it, but I no longer think that person necessarily has to be a PM.

Peter Yang: I feel the same way in my team. I think the best engineers never come to me asking, “Peter, what should we do next?” They go directly to talk to users, figure out what needs to be done, and then come back to discuss with me. It seems like many teams are moving in that direction; everyone is on the same page. The Codex team should be similar, right?

Alex & Romain: Many of the features used in the Codex app today were proposed by engineers themselves because they wanted those features. Indeed, many have come this way. But I also want to say that I particularly appreciate a type of engineer who enjoys spending time with users and thinking about what should be done.

At the same time, there is another equally strong type of engineer who is incredibly fast, excels at building systems, and thinks deeply but has no interest in chatting with users. I believe such individuals also have ample space.

This is precisely my fundamental view of the AI world. Each of us can become more “truly ourselves.” Do you understand what I mean? Just be yourself. AI and your surrounding team will cover the parts you don’t want to handle.

Peter Yang: That’s a great statement. However, I still feel that the label of “builder” is extremely important. Because I feel that every PM is expected to become a leader by default, and the logic of traditional career ladders is that you eventually need to become a VP or something, and then you no longer have time to build things yourself. You spend your entire day in product reviews, giving feedback here and there. I believe many PMs don’t want to become that way. At least I don’t want to. I want to remain close to users when a product is actually released.

Alex: I completely agree. Honestly, I never see PMs as leadership positions. I prefer to understand it as a role that fills in the gaps. Sometimes this role does require some leadership, but even then, that kind of leadership is more about helping everyone align rather than being the genius strategist who proposes the only correct direction.

However, one thing I can say for sure is that the best PMs at OpenAI are deeply involved in the front lines. And because of that, if you join OpenAI in a senior leadership role, it can be quite challenging because there’s still a strong need for you to dive into the details.

So you need to find a way to balance high-level responsibilities while still being genuinely engaged at the front lines. Personally, I believe the best way to join here is always to dive into the front lines.

What Does the Codex Team Look for When Hiring? It’s Not Your Resume

Peter Yang: Last question. You finally hired another PM. When you’re looking for members for the Codex team, aside from requiring them to be heavy users of Codex, what other traits do you value? What kind of people are you looking for?

Alex: We can both answer this question. I’ll go first. I’ve already mentioned this once before; I would return to that word: initiative.

Ultimately, “people who take the initiative” are the most important, both at OpenAI and especially in the Codex team. We intentionally do not structure the team in a way where, once you join, someone says, “Here are 12 tasks, increasing in difficulty; do them in order.”

Here, it’s more like, you come in. Alright, welcome aboard. That’s it. After that, it’s up to you.

So I particularly value those who are self-starters, proactive, energetic, and have ideas about which things are worth pursuing. Another important trait is that they are not afraid to propose differing opinions simply because existing ideas are in place. Because honestly, many of our existing decisions might have been made under certain random circumstances and are probably not right.

To idealize it further, if a person can actively absorb additional responsibilities and is willing to take on those that are still unclear and undefined, I would consider them almost the perfect teammate.

So those are the core criteria I consider most important. If you ask which role fits best here, my answer remains that any technical role, especially in engineering, is suitable.

Romain: I agree. From my side, in terms of developer experience, I usually look for high-initiative people, and they also need to be very technical, preferably already adept at using tools like Codex.

But beyond that, I particularly value a certain passion—whether you genuinely want to spend time with developers and builders and are willing to share your knowledge and experiences.

For instance, this week we just announced that Thomas will be joining my team this month. He’s the one who created the open-source Codex Monitor. I’m very pleased about this because he is a highly creative, productive person who is also very good at using Codex, but he also loves to share how he uses Codex to build things.

What we genuinely want to do is bring millions of developers into the new future represented by Codex. I believe agentic coding is fundamentally changing our understanding of software, applications, and product development.

There’s so much potential to show the world that anyone can build anything, and we can guide them through the process. So that’s probably the type of person I’m looking for.

Alex: Let me see if I understand correctly. In my mind, the definition of the DevX position is roughly: a very strong engineer who also excels at using Twitter.

Romain: You’re right about half of it; I need to add a footnote. Here, the term “good at Twitter” more accurately means “skilled at communicating with our community.”

Because if you go to some places in the world, you’ll find that many developers don’t use Twitter that frequently. For example, in Europe and some other regions, people use LinkedIn or other platforms more. So we need to clarify that what’s truly important is being able to communicate effectively on social media globally.

So it can be summarized as: you must be adept at social media. This point is definitely important. I also genuinely enjoy spending time teaching and doing educational things.

Peter Yang: I feel that whether a person has initiative can often be seen even before the formal interview, right? For example, do they consistently post online? Do they have side projects?

Alex: Absolutely. So if someone messages me expressing interest in collaborating, my first reaction is actually: is there a link? As long as there’s a link, I usually click it.

Of course, I might first check if the link is ridiculous, but honestly, I almost always click it. I’m just curious. Then if they casually attach a paragraph of their thoughts in the message, I usually read it carefully.

I’m not sure if this sounds a bit harsh, but if someone sends me a long explanation of “why I’m interested in this position” along with a resume, I tend to pay less attention to that than to “their thoughts” and “what they have done.” What I really want to see is what you thought and what you did.

Just the other day, someone asked me this question, and I suddenly realized that I didn’t even know where many people went to school.

Peter Yang: Who cares? Really. Who cares about that? I’m actually quite glad we live in an era where many of those past silly credentials are no longer as important. Who cares about prestigious schools or degrees? Just show me what you’ve done.
